Optimising TFS Builds

Recently I have been optimising TFS builds in the hope of reducing the time it takes to get and compile our solutions. It has been very successful so far, cutting build times from 2 hours to about 30 minutes. So I thought I would list the combination of things I have found, in the hope that it helps others reduce their build times.

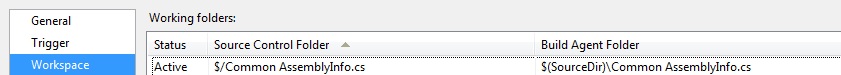

Reducing get scope of working folders

This took some time: the build I was working on had a lot of source code, so it took a few hours to figure out which folders I needed. But by reducing the scope of the Get from TFS as much as possible and fine-tuning the workspace mapping, I managed to shave a lot of time off. The idea is to get only what you need to build the current solution. I added Active mappings for the few folders that we needed for each build. Alternatively, you can map the root of your source code and cloak the folders that you do not need, if that's easier.

Scope individual files

Although the GUI doesn’t let you select individual files to Cloak/Get, you can in fact do it. You just enter the path to the individual file manually. For example…

This was very handy, as in some cases I only needed a single file from a folder, especially where the file lived in the source code root folder. Some of the folders I needed in full also contained big unrelated files, which I cloaked.

Keep drives in order

Schedule disk defragmentation on your build agents. If you do continuous integration and nightly builds, it might be best to schedule the defragmentation for the weekend. Just put Defrag.exe c: -f in a batch file and run it as a scheduled task. This rearranges the files on the hard drive into a more contiguous order, which reduces source code access times.

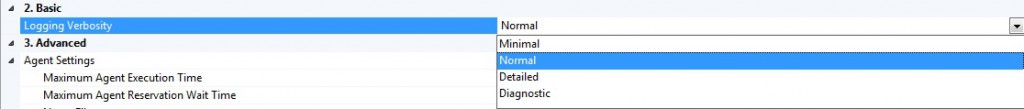

Reduce logging

By reducing logging verbosity you can decrease build times. But in my case I wasn’t able to do this, as our QA relies on the error messages to get the right developer to fix what went wrong.

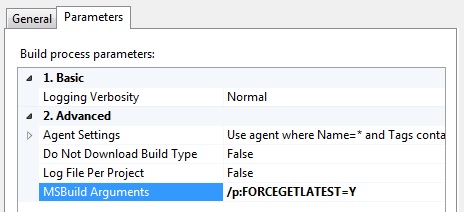

Get latest changesets

One of the best ways by far to optimise a build is to get only the latest changes. This reduces the Get time, and as you're only getting the changes since the last build, it can have a significant impact on a large code repository. I also created a build argument that lets us choose between getting just the latest changes and cleaning the workspace to get the complete source code out again. I did this in case we later had any issues with references not getting updated, or in case the workspace got in a muddle and the builds stopped functioning properly.

    <PropertyGroup Condition="'$(FORCEGETLATEST)' == 'Y'">
      <SkipClean>false</SkipClean>
      <ForceGet>true</ForceGet>
      <SkipInitializeWorkspace>false</SkipInitializeWorkspace>
    </PropertyGroup>
    <PropertyGroup Condition="'$(FORCEGETLATEST)' == 'N'">
      <SkipClean>true</SkipClean>
      <ForceGet>false</ForceGet>
      <SkipInitializeWorkspace>true</SkipInitializeWorkspace>
    </PropertyGroup>

Then, when queuing a new build, you can simply enter an MSBuild argument to change the type of Get, for example /p:FORCEGETLATEST=Y to force a clean and a full get.
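One thing to watch with the two conditional groups above: if FORCEGETLATEST is not passed at all, neither condition matches and none of the three properties is set by this fragment. A minimal sketch of a fallback, assuming you want the quick incremental get to be the default (that choice is my assumption, pick whichever suits your builds), is to give the property a default value before the conditional groups are evaluated:

```xml
<!-- Sketch only: place this ABOVE the two conditional PropertyGroups. -->
<!-- Defaults FORCEGETLATEST to N when no value was supplied on the    -->
<!-- command line; a value passed with /p: still takes precedence.     -->
<PropertyGroup>
  <FORCEGETLATEST Condition="'$(FORCEGETLATEST)' == ''">N</FORCEGETLATEST>
</PropertyGroup>
```

Because MSBuild evaluates properties in file order, the default has to appear before the groups that test it.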

Incremental builds

Incremental builds rebuild only the components that have changed; components that are still up to date are skipped. To make this possible, the Microsoft Build Engine compares the timestamps of a target's input files with the timestamps of its output files and decides whether to skip, build, or partially rebuild the target. However, there must be a one-to-one mapping between inputs and outputs. You can use transforms to let targets identify this direct mapping. It's something that will save a lot of time during the build process, but it can cause a lot of headaches if not configured properly. To enable incremental builds, you just need to edit the build definition in Visual Studio and set the Clean Workspace property to “None”.
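To make the input/output mapping a bit more concrete, here is a small stand-alone sketch of a target that MSBuild can build incrementally. The item name (TextFile), the *.txt pattern and the out\ folder are made up for illustration and are not taken from our build; the point is only the shape of the Inputs and Outputs attributes and the transform that ties each input to exactly one output.

```xml
<Project DefaultTargets="CopyChanged"
         xmlns="http://schemas.microsoft.com/developer/msbuild/2003">

  <!-- Hypothetical inputs: every .txt file next to this project file. -->
  <ItemGroup>
    <TextFile Include="*.txt" />
  </ItemGroup>

  <!-- The transform maps each input to one output file under out\.    -->
  <!-- MSBuild compares timestamps pair by pair: if every output is    -->
  <!-- newer than its input the target is skipped, and if only some    -->
  <!-- are stale the target runs for just those items.                 -->
  <Target Name="CopyChanged"
          Inputs="@(TextFile)"
          Outputs="@(TextFile->'out\%(Filename)%(Extension)')">
    <Copy SourceFiles="@(TextFile)"
          DestinationFiles="@(TextFile->'out\%(Filename)%(Extension)')" />
  </Target>

</Project>
```

Running this twice without touching the inputs should show the target being skipped on the second run, which is the behaviour you are relying on when the workspace is not cleaned between builds.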

Multiple build agents

If your build server is powerful enough, it might be worth looking at having multiple build agents. It's a good idea to have CI builds run on a different agent, so they don’t get held up behind lengthier deploy builds. This is only practical if you have a multi-core processor, because the build agents can then run on different cores. The only downside is disk access, which seems to be a big bottleneck, so having a fast drive is essential to keeping build times down.

A final note

Not all of these methods will be applicable to everyone’s builds, so play about with them and see what works. In my experience the workspace needs cleaning down every so often, as TFS isn’t always perfect. If a build gets interrupted in particular, you are likely to end up with an out-of-sync workspace. So it's worth having a way of forcing a clean down and a get latest, such as the method mentioned above. I personally would prefer clean builds all the time, as they prevent a certain class of errors from happening, but as we need a quick turnaround on builds it isn’t practical to clean down after every build. Instead, I plan on running a full clean on all nightly builds. It's more involved and takes a long time, but it's a good safety measure, and it doesn’t matter too much how long the nightly builds take. It may not catch an error immediately, but it would catch it by the end of the day, which keeps the number of check-ins you have to evaluate for the problem reasonable.